Why Building on General AI Tools Is Not the Same as a Document AI Platform

General AI tools are impressive. That is exactly why so many teams start there.

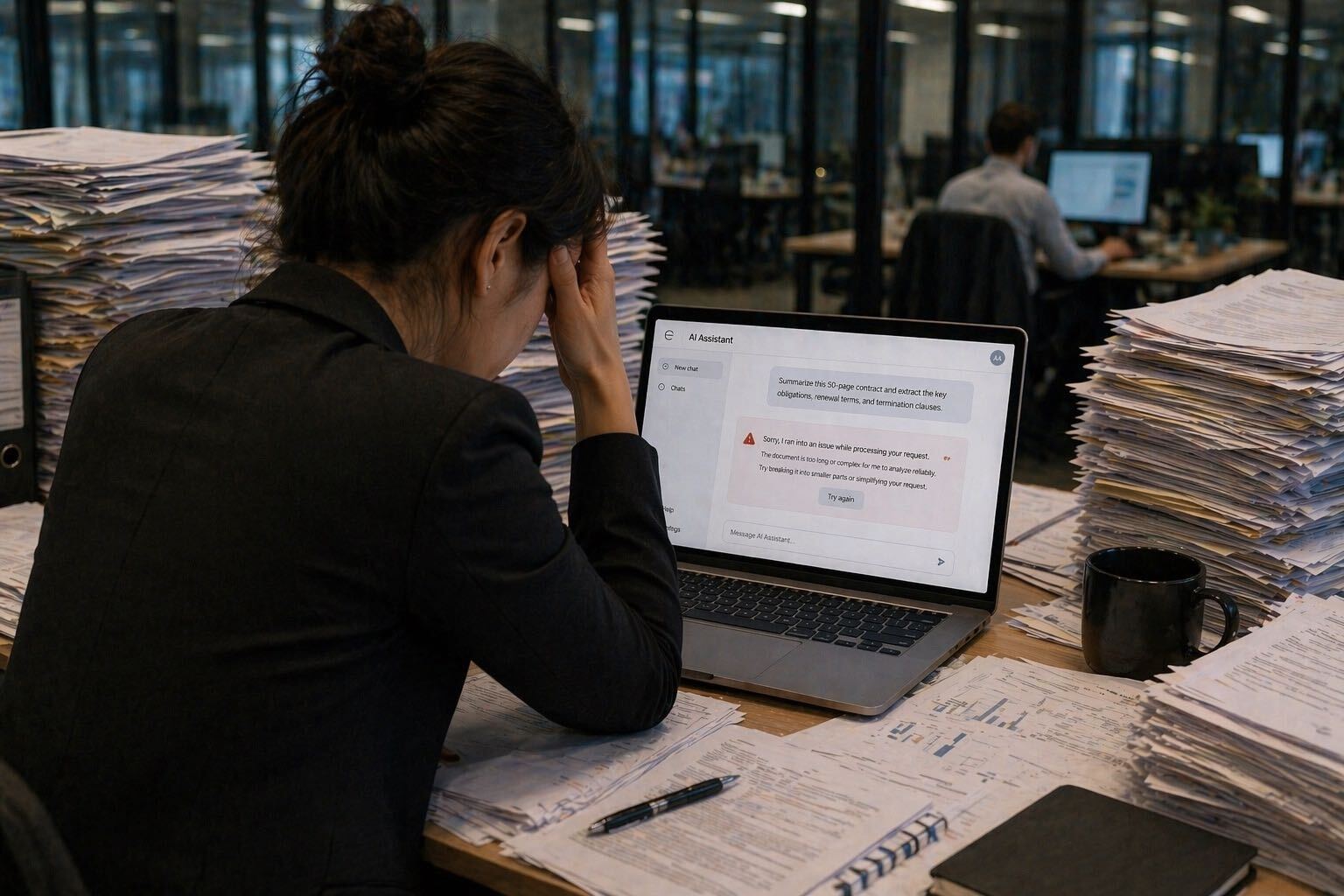

A user uploads a document, asks a question, and gets an answer in seconds. For brainstorming, drafting, summarizing, and lightweight knowledge work, that can feel almost magical. It is easy to see why business teams look at those experiences and think: why not use the same tools for claims, underwriting, audits, compliance reviews, or any other document-heavy process?

That instinct is understandable. It is also where many teams go wrong.

The issue is not that general AI tools are bad. They are not. They are often useful, flexible, and increasingly important across the enterprise. The issue is that there is a major difference between a tool that can talk about a document and a platform that can reliably run a document workflow. Those are not the same thing. And when the work is high volume, high stakes, and built around large, messy files, the gap becomes impossible to ignore.

The biggest mistake many buyers make is assuming consumer-grade AI tools are actually reading every page of the documents they upload. In many cases, they are not.

As Brad Schneider, CEO of Nomad Data, puts it:

“The single biggest mistake buyers make is thinking that these consumer-grade off-the-shelf tools are actually reading every page of the documents that they upload. They absolutely are not.”

That quote gets to the heart of the problem. People see a polished answer and assume the system did a thorough job. But polished does not always mean complete. Confident does not always mean accurate. And in document-heavy operations, those differences matter a lot.

Below is the real distinction between general AI tools and a document AI platform, why it matters, and what buyers should ask before they trust an AI system with critical work.

The appeal of general AI tools

General AI tools are attractive because they remove friction.

You do not need a large implementation project to get started. You do not need to define a workflow in detail on day one. You do not need a technical team just to test whether AI can help. Instead, you upload a file, ask a question, and get something back immediately. That instant feedback loop is powerful. It creates momentum, curiosity, and excitement across teams.

For many use cases, that is exactly the right experience.

General AI tools are excellent for early exploration. They are useful for idea generation, first-pass summarization, writing support, and getting oriented quickly in a body of information. In that context, they can save time and help users feel more productive almost immediately.

That is why so many document-heavy teams are tempted to start there. If a general AI tool can answer questions about a meeting transcript, summarize a report, or pull highlights from a policy memo, why would it not also work for a claim file, an underwriting submission, or a large audit packet?

Because those tasks may look similar on the surface, but they are fundamentally different underneath.

A lightweight knowledge task is not the same as an operational workflow. A one-off question is not the same as a repeatable process. A document that is clean, short, and text-based is not the same as a messy, multi-format file that drives an important business decision.

Still, the appeal is real. General AI tools promise speed, low effort, and instant access to answers. That combination is hard to resist, especially for organizations under pressure to move quickly on AI.

The problem is what happens after the first demo.

Where general AI tools break down on document-heavy work

General AI tools were designed to serve many different needs for many different users. That broad usefulness is their strength. It is also their limitation.

When the task becomes document-heavy, repetitive, and high stakes, the weaknesses start to show.

The first problem is shallow retrieval. Many users assume that when they upload a long document set, the system fully reads and reasons over every page. In reality, that is often not how these tools behave. Because they are built to serve huge user bases at low price points, they are typically optimized for responsiveness and scale, not exhaustive review of large and complex files. That can mean partial context, selective retrieval, or summarization shortcuts that sound complete even when key information was missed.

That is dangerous in real business workflows.

The second problem is that not all documents should be handled the same way. A clean digital memo is one thing. A scanned medical packet is another. A financial report with neat tables differs from a concatenated PDF with repeated page numbers, handwritten notes, visual marks, and inconsistent formatting. A document platform has to know the difference. Most general AI tools do not provide that level of control.

Even the earliest step, digitization, can go wrong. If the system cannot correctly interpret tables, forms, handwriting, checkboxes, or visual selections, the errors start before the actual reasoning step even begins. By the time the user asks a question, the input may already be incomplete or distorted.

Then there is the issue of how the same document should be read differently depending on the task.

Imagine a 500-page file where each page contains one lease. If the goal is to produce a table of every lease, pushing the entire document into one giant context window may be the wrong approach. Too much similar information in one pass can lead to omissions or muddled outputs. In that case, a better method may be to process each page sequentially, extract the relevant fields one by one, and build the output in a structured way. That requires workflow logic, retrieval control, and awareness of the document structure before the job even starts.

General tools usually do not give users that level of precision.
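The page-by-page approach in the lease example can be sketched in a few lines. This is only an illustration under stated assumptions: the `LeaseRow` fields, the `Tenant:` and `Monthly Rent:` labels, and the regular expressions are hypothetical stand-ins for whatever extraction step a real system would use. The point is the shape of the loop, where each page is handled on its own and every row keeps its page number.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class LeaseRow:
    page: int                      # 1-based page number, kept so each row can be traced back
    tenant: Optional[str]          # None when the field could not be found on the page
    monthly_rent: Optional[float]


# Hypothetical field labels; real lease pages would need a proper parser or model call.
TENANT_RE = re.compile(r"Tenant:\s*(.+)")
RENT_RE = re.compile(r"Monthly Rent:\s*\$?([\d,]+(?:\.\d+)?)")


def extract_lease(page_number: int, page_text: str) -> LeaseRow:
    """Extract one lease from one page, independently of every other page."""
    tenant = TENANT_RE.search(page_text)
    rent = RENT_RE.search(page_text)
    return LeaseRow(
        page=page_number,
        tenant=tenant.group(1).strip() if tenant else None,
        monthly_rent=float(rent.group(1).replace(",", "")) if rent else None,
    )


def build_lease_table(pages: list[str]) -> list[LeaseRow]:
    """Process pages sequentially so that every page yields exactly one row."""
    return [extract_lease(number, text) for number, text in enumerate(pages, start=1)]


# Illustrative input: two readable pages and one page with no recognizable fields.
pages = [
    "Tenant: Acme Corp\nMonthly Rent: $2,500.00",
    "Tenant: Beta LLC\nMonthly Rent: $1,800",
    "Scanned page with no readable fields",
]
table = build_lease_table(pages)
```

Because each page produces a row even when extraction fails, a missing lease shows up as an empty row that can be reviewed instead of silently disappearing from the table.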

The output problem comes next. One of the most common complaints from buyers is inconsistency. Run the same task several times in a general AI tool and the answers may vary from run to run. Sometimes the differences are small. Sometimes they are material. Either way, that unpredictability creates friction.

Repeatability is not a nice-to-have in document operations. It is the foundation.

If a team is reviewing files every day and producing outputs that feed other business processes, the system has to behave in a stable way. It has to look in the same places, apply the same rules, and return outputs in the same format. It cannot depend on whether one user wrote a better prompt than another. It cannot rely on luck.
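One way to make repeatability measurable is to run the same task several times and compare the structured outputs. The sketch below assumes the task returns a JSON-serializable dictionary; `stable_task` and `flaky_task` are hypothetical stand-ins for a real workflow call, and the field values are illustrative only.

```python
import hashlib
import itertools
import json
from collections import Counter
from typing import Callable


def fingerprint(output: dict) -> str:
    """Hash an output with sorted keys so key order cannot cause a false mismatch."""
    canonical = json.dumps(output, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def consistency_rate(run_task: Callable[[], dict], runs: int = 5) -> float:
    """Run the task `runs` times; return the share of runs that match the most common output."""
    counts = Counter(fingerprint(run_task()) for _ in range(runs))
    return counts.most_common(1)[0][1] / runs


def stable_task() -> dict:
    # Stand-in for a workflow that always returns the same answer.
    return {"claim_id": "C-1", "loss_date": "2024-01-05"}


# Stand-in for a workflow whose answer alternates between two values.
_answers = itertools.cycle([{"loss_date": "2024-01-05"}, {"loss_date": "2024-01-06"}])


def flaky_task() -> dict:
    return next(_answers)


stable_rate = consistency_rate(stable_task, runs=4)
flaky_rate = consistency_rate(flaky_task, runs=4)
```

A rate of 1.0 means every run agreed. Anything lower is a signal to inspect the differing runs before the output feeds another business process.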

The final problem is confidence without traceability. General AI tools are often very good at producing answers that sound polished and decisive. But when the user cannot clearly see where the answer came from, how it was derived, or whether something important was missed, the polish becomes a liability. A confident answer without explainability can create more risk than a slower system that shows its work.

Why bad answers are a real business risk

This is not just an AI quality issue. It is a business risk issue.

When a general AI tool gives a weak answer in a casual setting, the consequences are limited. Maybe a summary is a little off. Maybe a draft email needs edits. Maybe a user asks the question again in a different way.

That is not what happens in document-heavy business workflows.

In claims, for example, one missed fact can affect a decision, delay resolution, or lead to avoidable cost. In underwriting, a shallow read can weaken risk selection or cause important exclusions, inconsistencies, or loss indicators to be overlooked. In compliance, a vague answer with no traceability can create exposure. In audits, inconsistent outputs make review harder and reduce confidence in the system. In any workflow involving regulated decisions, financial consequences, or customer impact, incomplete answers are not just annoying. They are operationally dangerous.

The risk compounds because document workflows tend to be repetitive and high volume. A bad answer is rarely a one-time event. If the system is wrong in a consistent way, the problem can spread across dozens, hundreds, or thousands of files before anyone notices. That means mistakes scale with the tool.

There is also a hidden cost that shows up before any measurable business error: teams lose trust.

This matters more than many buyers realize. When people use a general AI tool for a specialized document task and get inconsistent or unreliable results, they often conclude that the task itself cannot be solved with AI. That conclusion is usually wrong. The issue is not that AI cannot support the workflow. The issue is that the wrong kind of AI was used.

As Schneider explains:

“Once teams see inconsistent outputs, they just immediately lose trust, not only in that tool, but in artificial intelligence in general.”

That is the real tragedy. A poor experience with a general-purpose tool can shut down the right AI initiative for the wrong reason.

Why building on top of general AI tools does not solve the core problem

A common response is: fine, we will just build something on top of a general model.

On paper, that sounds sensible. Wrap a workflow around ChatGPT or Claude. Add prompts. Add routing logic. Add a few scripts. Put a simple interface on top. Now it is enterprise-ready, right?

Not really.

A wrapper is not the same as a platform. Better prompts do not solve structural issues with document ingestion, retrieval depth, output consistency, or auditability. Glue code does not magically create repeatability. And a dashboard does not turn a general-purpose model into a production-grade document system.

This is where many teams learn the difference between a proof of concept and a real operating system for document work.

A demo is easy. Production is hard.

Production means handling the long tail of real-world document problems. It means managing scans, forms, inconsistent layouts, concatenated files, charts, tables, handwritten marks, and low-quality images. It means determining how different document types should be processed for different tasks. It means regression testing workflows so they do not break when models change. It means managing structured outputs and exceptions. It means deciding what the system should do when confidence is low or the source material is ambiguous.
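The regression-testing point can be illustrated with a minimal check that compares a workflow's current output against a previously approved baseline. This is a sketch under assumptions: the field names and values are hypothetical, and a real test suite would cover many files and workflows, not a single record.

```python
from typing import Any


def diff_against_baseline(baseline: dict[str, Any], current: dict[str, Any]) -> dict[str, tuple]:
    """Return {field: (expected, actual)} for every field whose value changed."""
    changed = {}
    for key in sorted(set(baseline) | set(current)):
        expected, actual = baseline.get(key), current.get(key)
        if expected != actual:
            changed[key] = (expected, actual)
    return changed


# Hypothetical approved output from an earlier run, and the output after a model update.
baseline = {"insured": "Acme Corp", "policy_limit": "1,000,000", "exclusions": "flood"}
current = {"insured": "Acme Corp", "policy_limit": "1,000,000", "exclusions": None}

regressions = diff_against_baseline(baseline, current)
```

An empty result means the workflow still behaves as approved. A non-empty result names the exact fields to review before the change reaches users.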

It also means maintaining that system over time.

That is where the cost of building on general AI tools starts to rise. The first prototype may come together quickly. The long-term ownership burden does not. Teams often discover that they are spending more and more time maintaining prompts, adjusting workarounds, monitoring results, and supporting users who are unsure how to get the right output.

For most companies, that is not the business they are in.

Unless document AI is your product, building and maintaining an entire document intelligence layer internally is usually a distraction from core priorities. The cost is not only engineering time. It is also operational overhead, business-user frustration, and the opportunity cost of relying on a fragile system for critical work.

The illusion of “more advanced” general AI products

Some buyers realize the limitations of consumer-style chat tools and move toward more enterprise-looking general AI products. These may include stronger collaboration features, shared workspaces, team workflows, or coworker-style interfaces. On the surface, that can make them feel closer to a real operational solution.

But better UX is not the same as better document intelligence.

A more polished collaboration layer does not fix the underlying challenge of reliably extracting, verifying, and structuring information from large, messy document sets. A nicer chat interface does not guarantee deep retrieval. Shared prompts do not ensure repeatability. Team features do not solve for citations, audit trails, or workflow-specific document handling.

This is where buyers can get fooled by maturity signaling.

A product may look more enterprise-ready because it has permissions, collaboration, and better packaging. But if the core system still handles the same workflow differently from run to run, still lacks fine-grained control over how documents are processed, and still depends on general-purpose retrieval methods, then the main problem remains unsolved.

In other words, the wrapper changed. The workflow problem did not.

That matters because the failure mode can be harder to spot. A more advanced-looking general AI product may inspire more confidence internally, which means teams may trust it with more critical work before realizing it is not truly built for the job. That increases risk rather than reducing it.

What a real document AI platform does differently

A real document AI platform starts from a different premise: document work is a workflow, not a chat session.

That shift changes everything.

- It treats ingestion seriously.

Real-world files are messy. They include scans, emails, attachments, forms, tables, low-quality images, repeated page numbering, handwriting, stamps, and inconsistent structures. A document platform is built to ingest and interpret those materials accurately, rather than assuming every file is a clean text document.

- It uses stronger OCR and parsing.

Clean text extraction is not enough when critical data may live in table cells, visual annotations, circled selections, or awkward layouts. The platform has to handle the complexity that actual operations generate.

- It controls retrieval.

Instead of hoping the model found the right snippet, a document AI platform can determine how a file should be read based on the workflow. One task may require exhaustive page-by-page review. Another may require cross-document comparison. Another may require extracting fields into a structured schema. The system should support the right method for the job, not force every document task into the same generic pattern.

- It supports structured outputs.

Operational workflows often need more than a paragraph. They need a CSV, a checklist, a table, a report in a defined format, or fields that feed downstream systems. A document platform produces outputs that can be used, not just read.

- It provides citations and traceability.

Users need to see where answers came from, ideally down to the exact document and page. That supports validation, trust, and audit readiness.

- It is repeatable.

The same task should be handled the same way every time, across users and over time. That is how you turn AI from an interesting assistant into a dependable business process.

- It is auditable.

If a question comes up later, the organization should be able to understand how the output was produced, what source material supported it, and what the system did when confidence was low.

- It is designed for scale.

Not just model scale, but workflow scale. That means large volumes of files, repeated tasks, changing inputs, and real operational pressure.
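Several of the capabilities above (structured outputs, citations, and defined behavior when confidence is low) can be pictured as a single record type. The sketch below is illustrative only and does not describe any particular product: `CitedField`, the 0.8 threshold, the file names, and the `needs_review` status are all assumptions made for the example.

```python
import csv
import io
from dataclasses import dataclass
from typing import Optional


@dataclass
class CitedField:
    name: str
    value: Optional[str]      # None means the system abstained
    document: Optional[str]   # source file the value was read from
    page: Optional[int]       # 1-based page number within that file
    confidence: float         # score between 0.0 and 1.0 (assumed to be available)


def finalize(field: CitedField, min_confidence: float = 0.8) -> CitedField:
    """Abstain (clear the value) when confidence is low or no source is attached."""
    has_source = field.document is not None and field.page is not None
    if field.confidence < min_confidence or not has_source:
        return CitedField(field.name, None, field.document, field.page, field.confidence)
    return field


def to_csv(fields: list[CitedField]) -> str:
    """Write fields to CSV so downstream systems receive the same columns every time."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["field", "value", "document", "page", "status"])
    for f in fields:
        status = "ok" if f.value is not None else "needs_review"
        page = "" if f.page is None else f.page
        writer.writerow([f.name, f.value or "", f.document or "", page, status])
    return buffer.getvalue()


# Illustrative records: one confident answer and one that falls below the threshold.
fields = [
    finalize(CitedField("policy_number", "PN-123", "policy.pdf", 4, 0.97)),
    finalize(CitedField("loss_date", "2024-01-05", "notes.pdf", 12, 0.41)),
]
report = to_csv(fields)
```

The low-confidence field keeps its document and page, so a reviewer knows where to look, but its value is withheld and the row is flagged for review.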

Why Doc Chat is better for this problem

Doc Chat was built specifically for high-volume, high-stakes document analysis. That matters because it was designed around the reality of document workflows, not the assumptions of general-purpose chat.

Instead of asking users to reinvent the task with prompts every time, Doc Chat is built to support repeatable workflows. The goal is not just to answer a question once. It is to help teams get accurate, explainable, consistent answers again and again across large document sets.

That includes the capabilities that matter most in production: handling messy files, supporting strong OCR and parsing, controlling how documents are read, producing structured outputs, and citing the exact source material so users can verify the answer themselves.

It also includes something buyers often underestimate: ownership.

With a self-serve general AI tool, every user is largely on their own. If results are inconsistent or wrong, the usual advice is to tweak the prompt and try again. That may be tolerable for casual usage. It is not acceptable for mission-critical workflows.

With Doc Chat, the customer does not need to be an expert in prompt engineering or model behavior. They explain the business process. Nomad helps tune the workflow. If the output needs to change, the workflow changes. If there is an issue, there is one team to call. That white-glove support is not a side feature. It is part of what makes the system usable in production.

Most importantly, Doc Chat is built for the kind of work general AI tools struggle with most: long, complex, document-heavy workflows where completeness, consistency, and traceability matter.

What buyers should ask before choosing an AI solution

Buyers evaluating AI for document-heavy work should move past the surface-level question of whether a tool seems smart. The better question is whether it can support the actual workflow.

Here are the questions that matter:

- Can it show exactly where each answer came from?

If the system cannot point to the source document and page, users cannot validate the result.

- Can it handle thousands of pages and messy document sets?

A solution that works on short, clean files may break on real-world materials.

- Is the output consistent across runs and across users?

If the same workflow gives different answers depending on who asked or how they asked, it is not operationally reliable.

- Can the workflow be defined in advance?

Business processes should not depend on every user writing the perfect prompt each time.

- Can it produce structured outputs?

A nice paragraph is not enough if the downstream need is a checklist, table, report, or system-ready format.

- Can it abstain, escalate, or flag ambiguity?

Reliable systems should know when not to pretend.

- Can it be audited later?

In high-stakes workflows, organizations need to understand how outputs were generated and what sources supported them.

- Who owns fixing issues when something goes wrong?

This may be the most practical question of all. If a workflow breaks, is there a real team accountable for making it work?

These are the questions that separate general AI curiosity from document AI readiness.

The cost of using the wrong tool

General AI tools absolutely have a place in the enterprise. They are useful, powerful, and often worth adopting for the right kinds of work.

But critical document workflows are not where teams should confuse broad usefulness with fit for purpose.

When the job involves high volumes of messy files, repeated processes, regulated environments, or decisions that carry real business consequences, the difference between general AI and document AI becomes very clear. One may sound smart. The other is built to work.

That is the real buying decision.

It is not “should we use AI?”

It is “what kind of AI can actually support the way this work gets done?”

The real choice is not between old software and modern AI. It is between general AI that sounds smart and document AI that is built to actually work.

FAQs

What is the difference between general AI tools and a document AI platform?

General AI tools are broad-purpose systems designed for many kinds of tasks, from drafting to summarization to brainstorming. A document AI platform is purpose-built to run document workflows reliably, especially when files are long, messy, high volume, and tied to real business decisions.

Why are general AI tools risky for document-heavy workflows?

Because polished answers can hide shallow retrieval, missed details, inconsistent outputs, and weak traceability. In high-stakes workflows, one missed fact can lead to bad decisions, compliance issues, or loss of trust.

Can we build our own solution on top of a general AI model?

You can build a proof of concept, but that does not automatically solve the hard parts of document AI. Teams still need to manage ingestion, OCR, retrieval, structured outputs, consistency, auditability, maintenance, and long-term support.

Do more advanced general AI products solve the problem?

Not necessarily. Better collaboration features or a nicer interface do not solve the underlying challenge of extracting reliable answers from large, complex document sets. Enterprise packaging is not the same as workflow control.

What should buyers look for in a document AI platform?

Look for strong OCR, purpose-built ingestion, controlled retrieval, structured outputs, source citations, repeatability, auditability, and clear ownership when something needs to be changed or fixed.

Why do citations matter?

Citations let users verify the answer against the original source. That is essential for trust, especially when the source material spans hundreds or thousands of pages.

Which workflows benefit most from a document AI platform?

Any document-heavy manual workflow can benefit from a robust document AI platform. That includes claims review, underwriting, audits, compliance reviews, contract review, medical document review, financial analysis, and any other process where a missed detail can create cost, risk, or delay.

What makes Doc Chat different?

Doc Chat is designed specifically for high-volume, high-stakes document analysis. It focuses on accurate, explainable, repeatable outputs from complex document sets, rather than relying on a general-purpose chat experience and hoping it works.